Masks Reveal More Than They Hide

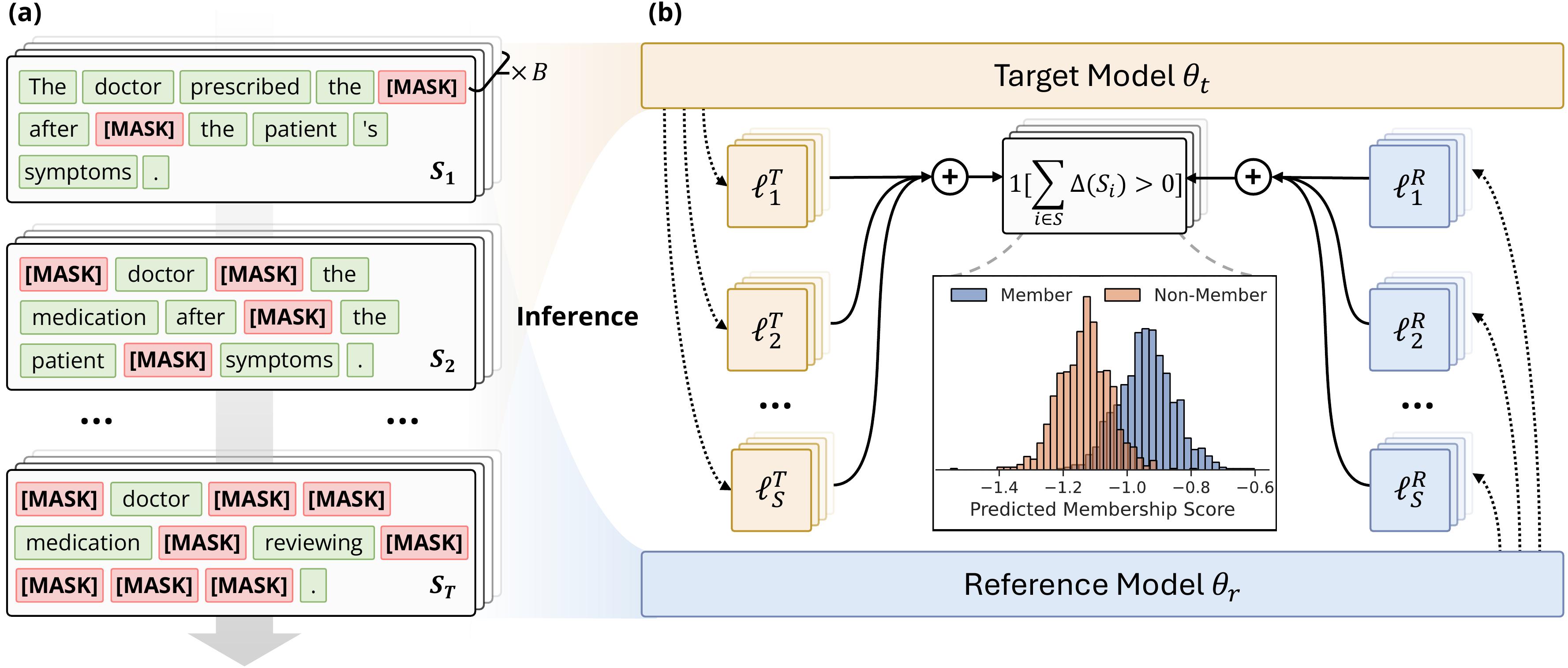

A walkthrough of our ICLR 2026 paper, “Membership Inference Attacks Against Fine-tuned Diffusion Language Models”

Introduction

Diffusion Language Models (DLMs) are emerging as a serious alternative to the autoregressive paradigm. Models like LLaDA

In this paper

Why DLMs Change the Game

One Signal vs. $2^L$ Signals

In an autoregressive model (ARM), each token is predicted from its left context only. The membership signal for a sequence $\mathbf{x}$ of length $L$ is:

\[\Delta_{\text{AR}}(\mathbf{x}) = \frac{1}{L}\sum_{i=1}^{L} \left[\ell^{\text{R}}_i - \ell^{\text{T}}_i\right], \quad \ell_i = -\log p(x_i \mid x_{\lt i})\]

Here $\ell^{\text{R}}_i$ and $\ell^{\text{T}}_i$ are the per-token losses under the reference and target (fine-tuned) models. One sequence, one fixed signal. The autoregressive factorization dictates everything; there is no freedom in how to probe.

DLMs break this constraint. A DLM predicts masked tokens conditioned on all unmasked tokens, so the signal depends on which positions are masked:

\[\Delta_{\text{DF}}(\mathbf{x}; \mathcal{S}) = \frac{1}{|\mathcal{S}|}\sum_{i \in \mathcal{S}} \left[\ell^{\text{R}}_i(\mathbf{x}, \mathcal{S}) - \ell^{\text{T}}_i(\mathbf{x}, \mathcal{S})\right]\]

where $\mathcal{S} \subseteq [L]$ is the set of masked positions. Since $\mathcal{S}$ can be any subset of $[L]$, the attacker has up to $2^L$ possible mask configurations to try, each a distinct experiment that can reveal different memorization patterns.

Consider tokens $x_i$ and $x_j$ with a relationship memorized during fine-tuning. In an ARM, if $j > i$, the prediction of $x_i$ never sees $x_j$, so the backward dependency of $x_i$ on $x_j$ is always invisible. In a DLM, you can mask just $x_i$, just $x_j$, or both simultaneously. Each configuration probes a different facet of what the model remembers.
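To make the mask dependence concrete, here is a minimal Python sketch of $\Delta_{\text{DF}}$ on a toy example. The functions `toy_reference_loss` and `toy_target_loss` are hypothetical stand-ins for real model calls (they are not taken from the paper or its code), and the toy target is assumed to have memorized that position 1 is easy to predict while position 3 is visible.

```python
from itertools import combinations
from typing import Callable, Dict, FrozenSet, List

# A loss function maps a mask (set of masked positions) to per-position losses.
LossFn = Callable[[FrozenSet[int]], Dict[int, float]]


def delta_df(ref_loss: LossFn, tgt_loss: LossFn, mask: FrozenSet[int]) -> float:
    """Mean (reference - target) loss over the masked positions in `mask`."""
    if not mask:
        raise ValueError("mask must contain at least one position")
    ref, tgt = ref_loss(mask), tgt_loss(mask)
    return sum(ref[i] - tgt[i] for i in mask) / len(mask)


def all_masks(length: int) -> List[FrozenSet[int]]:
    """Every non-empty subset of positions 0..length-1 (2**length - 1 masks)."""
    return [
        frozenset(c)
        for k in range(1, length + 1)
        for c in combinations(range(length), k)
    ]


def toy_reference_loss(mask: FrozenSet[int]) -> Dict[int, float]:
    # Hypothetical reference model: the same loss for every masked token.
    return {i: 2.0 for i in mask}


def toy_target_loss(mask: FrozenSet[int]) -> Dict[int, float]:
    # Hypothetical fine-tuned model: position 1 becomes easy only while
    # position 3 is still visible (i.e. not masked).
    return {i: 0.5 if (i == 1 and 3 not in mask) else 2.0 for i in mask}


if __name__ == "__main__":
    for mask in all_masks(4):
        signal = delta_df(toy_reference_loss, toy_target_loss, mask)
        print(sorted(mask), round(signal, 3))
```

In this toy setup only the masks that hide position 1 while leaving position 3 visible produce a non-zero signal, which mirrors the configuration-specific behavior described above.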

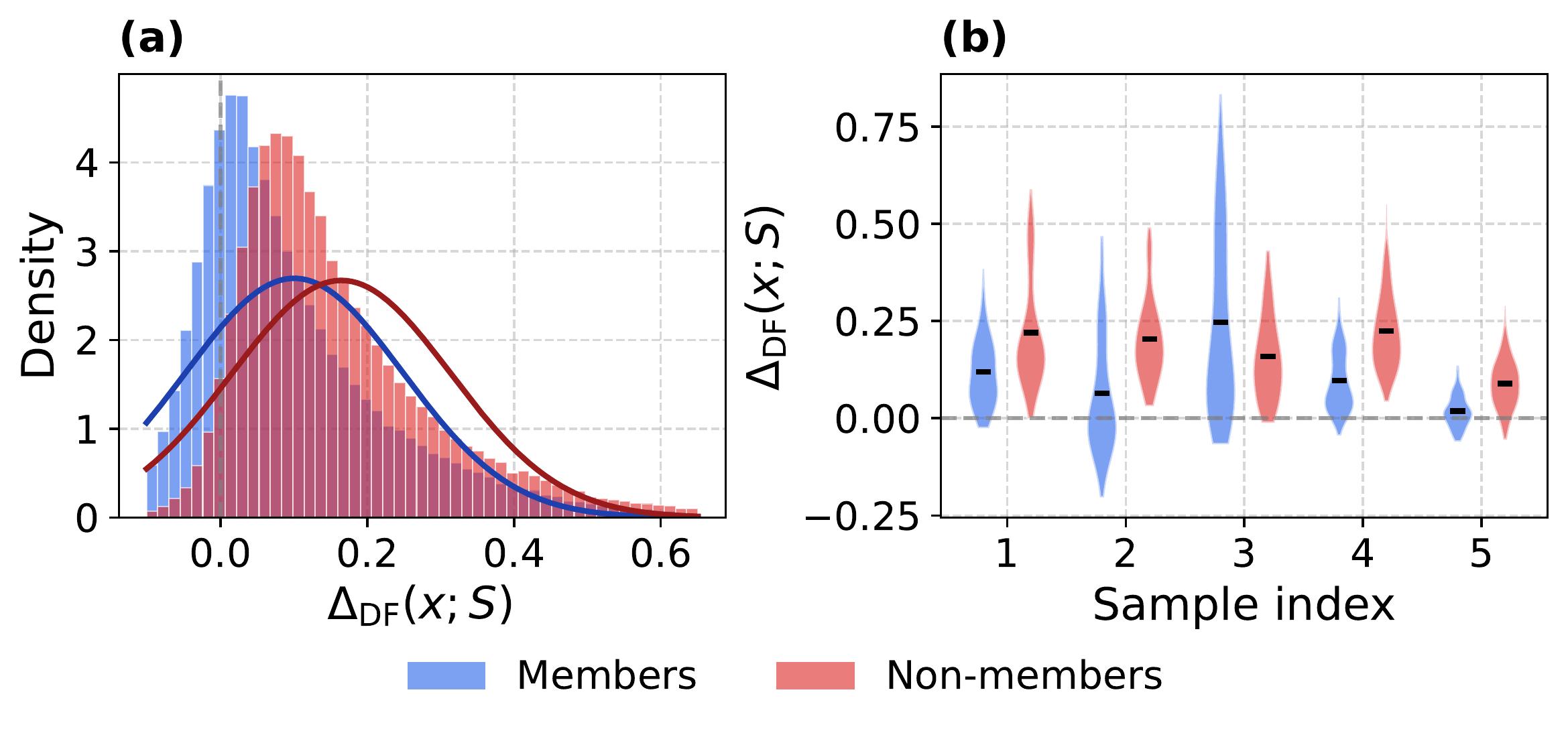

The Sparsity Problem

This multiplicity is both a gift and a curse. The catch: most mask configurations reveal nothing. Memorization is configuration-specific: only certain patterns activate the token relationships learned during fine-tuning. When we evaluated 100 random mask configurations per sample on LLaDA-8B, the intra-sample variance in signal strength ($\sigma \approx 0.10$) exceeded the average member/non-member margin ($\delta \approx 0.06$). A single random mask is statistically likely to miss the signal entirely.
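A rough back-of-the-envelope calculation shows what these two numbers imply. The sketch below treats $\sigma$ as a standard deviation and assumes the per-mask noise is Gaussian; both are simplifying assumptions made here for illustration, not claims from the paper.

```python
import math


def normal_cdf(z: float) -> float:
    """Standard normal CDF, written with math.erf."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


sigma = 0.10  # intra-sample spread of the signal reported above
delta = 0.06  # average member/non-member margin reported above

snr = delta / sigma

# Assumption: a member's single-mask signal is Gaussian, centered `delta`
# above the non-member average, with standard deviation `sigma`.
p_margin_hidden = normal_cdf(-snr)

print(f"signal-to-noise ratio: {snr:.2f}")
print(f"P(member signal <= non-member average): {p_margin_hidden:.3f}")
```

Under these assumptions, a member's single-mask signal falls at or below the non-member average in roughly 27% of draws, so one mask alone is an unreliable probe.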

Making matters worse, the tokens with the largest loss differences are often the worst membership indicators. Domain-specific tokens like “Background” or “Biography” show enormous loss reductions after fine-tuning, but they do so equally for members and non-members. These are domain adaptation artifacts, not memorization signals. Global averaging lets these outliers drown out the subtle, consistent shifts that actually indicate membership.

The following chart illustrates this: we compare the magnitude of mean loss difference against the actual discriminative value (Signal Strength $\approx 1.0$ means no discriminative power). The tokens that look most “interesting” by magnitude are precisely the least useful.

{

"tooltip": {

"trigger": "axis",

"axisPointer": { "type": "cross" }

},

"legend": { "top": 0 },

"grid": {

"left": "8%",

"right": "8%",

"bottom": "4%",

"top": "14%",

"containLabel": true

},

"xAxis": {

"type": "category",

"data": ["Biography", "Background", "Life", "List", "Description", "Joseph"],

"axisLabel": {

"fontStyle": "italic"

}

},

"yAxis": [

{

"type": "value",

"name": "Mean Loss Diff",

"min": 0,

"max": 1.2,

"position": "left"

},

{

"type": "value",

"name": "Signal Strength",

"min": 0,

"max": 1.2,

"position": "right",

"splitLine": { "show": false }

}

],

"animationDuration": 1200,

"series": [

{

"name": "Mean Loss Diff",

"type": "bar",

"data": [1.040, 0.671, 0.631, 0.544, 0.399, 0.393],

"itemStyle": { "color": "#7209b7", "borderRadius": [3, 3, 0, 0] },

"barWidth": "40%"

},

{

"name": "Signal Strength (1.0 = useless)",

"type": "line",

"yAxisIndex": 1,

"data": [0.705, 0.973, 0.701, 0.501, 0.526, 0.978],

"lineStyle": { "color": "#e76f51", "width": 3 },

"itemStyle": { "color": "#e76f51", "borderWidth": 2 },

"symbol": "circle",

"symbolSize": 10,

"markLine": {

"silent": true,

"lineStyle": { "type": "dashed", "color": "#999" },

"data": [{ "yAxis": 1.0, "label": { "show": true, "position": "insideEndTop" } }]

}

}

]

}

This is why naive averaging, the approach used by every existing MIA baseline, fails catastrophically on DLMs. We need a fundamentally different aggregation strategy.

SAMA: Subset-Aggregated Membership Attack

SAMA’s design flows from three observations: signals are sparse, noise is heavy-tailed, and different masking densities reveal different patterns. Each observation motivates one component.

Step 1: Sample and Compare Locally

At each masking step $t$ with mask $\mathcal{S}_t$ containing $k_t$ masked positions, we don’t average all $k_t$ token losses. Instead, we draw $N$ random subsets $\mathcal{U}^n \subset \mathcal{S}_t$ of size $m \ll k_t$ and compute a localized loss difference per subset:

\[\Delta^n_{\text{DF}}(\mathbf{x}; \mathcal{S}_t) = \frac{1}{m}\sum_{i \in \mathcal{U}^n} \left[\ell^{\text{R}}_i(\mathbf{x}, \mathcal{S}_t) - \ell^{\text{T}}_i(\mathbf{x}, \mathcal{S}_t)\right]\]

Each subset excludes different tokens, so a domain-specific outlier that dominates one subset’s signal appears in only a fraction of the $N$ measurements. Consistent membership signals, on the other hand, accumulate across most subsets.
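The subset sampling in Step 1 can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the released implementation: `subset_deltas` is a hypothetical helper name, the per-token losses are assumed to be precomputed under the mask $\mathcal{S}_t$, and the toy numbers (20 masked positions, one outlier, $m = 4$) are made up.

```python
import random
from typing import Dict, List, Sequence


def subset_deltas(
    ref_losses: Dict[int, float],
    tgt_losses: Dict[int, float],
    masked_positions: Sequence[int],
    m: int,
    num_subsets: int,
    rng: random.Random,
) -> List[float]:
    """Localized loss differences over `num_subsets` random subsets of size m."""
    if not 0 < m <= len(masked_positions):
        raise ValueError("need 0 < m <= number of masked positions")
    deltas = []
    for _ in range(num_subsets):
        # Draw m masked positions without replacement.
        subset = rng.sample(list(masked_positions), m)
        deltas.append(sum(ref_losses[i] - tgt_losses[i] for i in subset) / m)
    return deltas


# Toy data: 19 positions with a small consistent shift, plus one outlier.
positions = list(range(20))
ref = {i: 2.0 for i in positions}
tgt = {i: 1.95 for i in positions}
tgt[0] = 7.0  # hypothetical outlier token with a difference of -5.0

global_mean = sum(ref[i] - tgt[i] for i in positions) / len(positions)
local = subset_deltas(ref, tgt, positions, m=4, num_subsets=200, rng=random.Random(0))
positive_share = sum(1 for d in local if d > 0) / len(local)

print(f"global average: {global_mean:.4f}")
print(f"share of positive subset signals: {positive_share:.2f}")
```

The single outlier drags the global average negative, while it can only enter the subsets that happen to draw position 0 (each subset includes it with probability $m/k_t = 4/20$ in this toy setting).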

Step 2: Vote, Don’t Average

Rather than using the magnitude of each $\Delta^n$, we reduce it to a single bit:

\[B^n(\mathbf{x}) = \mathbf{1}\!\left[\Delta^n_{\text{DF}}(\mathbf{x}; \mathcal{S}_t) > 0\right]\]

For non-members, the target and reference models behave similarly on unseen data. The loss difference is pure noise, sometimes positive, sometimes negative, so $B^n = 1$ with probability $\approx 0.5$ whenever that noise is roughly symmetric around zero, regardless of its scale and even if it has infinite variance.

For members, configurations that activate memorization consistently push $\Delta^n$ positive, biasing $B^n$ toward 1. Aggregating across $N$ subsets:

\[\hat{\beta}_t(\mathbf{x}) = \frac{1}{N}\sum_{n=1}^{N} B^n(\mathbf{x})\]

Non-members cluster around 0.5; members shift detectably above it. The sign test has a breakdown point of 0.5: fewer than half of the subsets can be arbitrarily corrupted without flipping the result, versus a breakdown point of $1/N$ for the mean, where a single corrupted subset is enough.
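The contrast between voting and averaging is easy to see on toy numbers. The sketch below uses hypothetical values chosen for illustration: a member-like case with one extreme outlier, and a non-member-like case with symmetric Cauchy noise (which has infinite variance). `vote_fraction` is an illustrative name for $\hat{\beta}_t$, not a function from the paper's code.

```python
import math
import random
from statistics import mean
from typing import Sequence


def vote_fraction(deltas: Sequence[float]) -> float:
    """Fraction of subset-level differences that are strictly positive."""
    if not deltas:
        raise ValueError("need at least one difference")
    return sum(1 for d in deltas if d > 0) / len(deltas)


# Member-like toy case: nine small positive differences, one huge outlier.
member_deltas = [0.05] * 9 + [-50.0]
member_mean = mean(member_deltas)           # dragged negative by the outlier
member_vote = vote_fraction(member_deltas)  # ignores the outlier's magnitude

# Non-member-like toy case: symmetric Cauchy noise drawn by inverse-CDF
# sampling. The seed and sample size are arbitrary.
rng = random.Random(0)
cauchy_noise = [math.tan(math.pi * (rng.random() - 0.5)) for _ in range(10_000)]
nonmember_vote = vote_fraction(cauchy_noise)

print(f"member mean: {member_mean:.3f}, member vote: {member_vote:.2f}")
print(f"non-member vote under Cauchy noise: {nonmember_vote:.3f}")
```

The mean of the member-like case is negative even though nine of ten subsets agree, whereas the vote fraction stays at 0.9; under the symmetric heavy-tailed noise the vote fraction stays close to 0.5.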

Step 3: Sweep Across Densities

Different masking densities probe the model at different scales. Sparse masks (few tokens masked) give strong per-token signals but fewer data points; dense masks give weaker signals but more aggregation opportunities. SAMA linearly sweeps masking density from $\alpha_{\min}$ to $\alpha_{\max}$ across $T$ steps, then combines them with inverse-step weights that prioritize the cleaner early (sparse) steps:

\[\text{SAMA}(\mathbf{x}) = \sum_{t=1}^{T} w_t \, \hat{\beta}_t(\mathbf{x}), \qquad w_t = \frac{1/t}{\sum_{i=1}^{T} 1/i}\]

The final score is bounded in $[0, 1]$, requires no dataset-specific tuning, and transforms sparse signal detection into a robust voting mechanism.
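As a small worked example of the weighting, the following sketch computes the inverse-step weights and the final score from per-step vote fractions. The function names, the exact form of the linear schedule (evenly spaced endpoints included), and the example values $\alpha_{\min} = 0.1$, $\alpha_{\max} = 0.9$ are assumptions made for illustration.

```python
from typing import List, Sequence


def density_schedule(alpha_min: float, alpha_max: float, steps: int) -> List[float]:
    """Linearly spaced masking densities from alpha_min to alpha_max."""
    if steps < 1:
        raise ValueError("steps must be at least 1")
    if steps == 1:
        return [alpha_min]
    span = alpha_max - alpha_min
    return [alpha_min + span * t / (steps - 1) for t in range(steps)]


def inverse_step_weights(steps: int) -> List[float]:
    """w_t = (1/t) / sum_i (1/i) for t = 1..steps; the weights sum to 1."""
    raw = [1.0 / t for t in range(1, steps + 1)]
    total = sum(raw)
    return [r / total for r in raw]


def sama_score(betas: Sequence[float]) -> float:
    """Weighted sum of per-step vote fractions, ordered from sparse to dense."""
    weights = inverse_step_weights(len(betas))
    return sum(w * b for w, b in zip(weights, betas))


if __name__ == "__main__":
    print(density_schedule(0.1, 0.9, 5))
    print(inverse_step_weights(3))       # proportional to 1, 1/2, 1/3
    print(sama_score([0.8, 0.6, 0.5]))   # early (sparse) steps count most
```

Because the weights are non-negative and sum to 1, the score is a convex combination of vote fractions, which is why it stays in $[0, 1]$ and why a sample whose votes all sit at 0.5 scores 0.5.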

Results

We evaluate SAMA on LLaDA-8B

Baselines Are Nearly Blind

The chart below shows AUC performance on six MIMIR datasets (LLaDA-8B). Toggle methods via the legend.

{

"tooltip": {

"trigger": "axis",

"axisPointer": { "type": "shadow" }

},

"legend": {

"top": 0,

"textStyle": { "fontSize": 11 },

"selected": {

"Loss": false,

"ZLIB": false,

"Lowercase": false,

"Min-K%": false,

"Min-K%++": false,

"BoWs": false,

"ReCall": false,

"CON-ReCall": false,

"Neighbor": false,

"Ratio": true,

"SecMI": true,

"PIA": true,

"SAMA (Ours)": true

}

},

"grid": {

"left": "4%",

"right": "4%",

"bottom": "4%",

"top": "18%",

"containLabel": true

},

"xAxis": {

"type": "category",

"data": ["ArXiv", "GitHub", "HackerNews", "PubMed Central", "Wikipedia", "Pile CC"],

"axisLabel": { "rotate": 20 }

},

"yAxis": {

"type": "value",

"name": "AUC",

"min": 0.40,

"max": 0.95

},

"animationDuration": 1500,

"animationEasing": "cubicOut",

"series": [

{

"name": "Loss",

"type": "bar",

"data": [0.506, 0.551, 0.495, 0.498, 0.495, 0.502],

"itemStyle": { "color": "#bbb" }

},

{

"name": "ZLIB",

"type": "bar",

"data": [0.490, 0.561, 0.486, 0.488, 0.495, 0.491],

"itemStyle": { "color": "#ccc" }

},

{

"name": "Lowercase",

"type": "bar",

"data": [0.515, 0.579, 0.483, 0.502, 0.535, 0.518],

"itemStyle": { "color": "#aaa" }

},

{

"name": "Min-K%",

"type": "bar",

"data": [0.488, 0.530, 0.492, 0.500, 0.482, 0.491],

"itemStyle": { "color": "#d4a574" }

},

{

"name": "Min-K%++",

"type": "bar",

"data": [0.485, 0.496, 0.486, 0.494, 0.488, 0.491],

"itemStyle": { "color": "#c49464" }

},

{

"name": "BoWs",

"type": "bar",

"data": [0.519, 0.656, 0.527, 0.489, 0.471, 0.480],

"itemStyle": { "color": "#ddd" }

},

{

"name": "ReCall",

"type": "bar",

"data": [0.501, 0.562, 0.494, 0.495, 0.506, 0.500],

"itemStyle": { "color": "#9bc4cb" }

},

{

"name": "CON-ReCall",

"type": "bar",

"data": [0.500, 0.549, 0.501, 0.498, 0.498, 0.498],

"itemStyle": { "color": "#7ba7b0" }

},

{

"name": "Neighbor",

"type": "bar",

"data": [0.506, 0.478, 0.520, 0.506, 0.504, 0.505],

"itemStyle": { "color": "#a0a0a0" }

},

{

"name": "Ratio",

"type": "bar",

"data": [0.597, 0.743, 0.575, 0.555, 0.653, 0.604],

"itemStyle": { "color": "#e76f51" }

},

{

"name": "SecMI",

"type": "bar",

"data": [0.520, 0.604, 0.523, 0.510, 0.522, 0.515],

"itemStyle": { "color": "#f4a261" }

},

{

"name": "PIA",

"type": "bar",

"data": [0.525, 0.571, 0.494, 0.496, 0.522, 0.509],

"itemStyle": { "color": "#e9c46a" }

},

{

"name": "SAMA (Ours)",

"type": "bar",

"data": [0.850, 0.876, 0.657, 0.814, 0.790, 0.778],

"itemStyle": {

"color": "#7209b7",

"borderRadius": [3, 3, 0, 0]

}

}

]

}

Most existing methods hover near random chance (AUC $\approx$ 0.5). Even diffusion-specific methods like SecMI and PIA stay below 0.53 everywhere except GitHub, where they reach 0.604 and 0.571. The best autoregressive baseline, Ratio

Where It Matters Most

AUC tells part of the story. In practice, privacy auditing operates at very low false positive rates: you need high confidence before flagging a sample as a training member. The next chart shows TPR@1%FPR, the fraction of members correctly identified while only misclassifying 1% of non-members. Toggle methods via the legend.

{

"tooltip": {

"trigger": "axis",

"axisPointer": { "type": "shadow" }

},

"legend": {

"top": 0,

"textStyle": { "fontSize": 11 },

"selected": {

"Loss": false,

"ZLIB": false,

"Lowercase": false,

"Min-K%": false,

"Min-K%++": false,

"BoWs": false,

"ReCall": false,

"CON-ReCall": false,

"Neighbor": false,

"Ratio": true,

"SecMI": true,

"PIA": true,

"SAMA (Ours)": true

}

},

"grid": {

"left": "4%",

"right": "4%",

"bottom": "4%",

"top": "18%",

"containLabel": true

},

"xAxis": {

"type": "category",

"data": ["ArXiv", "GitHub", "HackerNews", "PubMed Central", "Wikipedia", "Pile CC"],

"axisLabel": { "rotate": 20 }

},

"yAxis": {

"type": "value",

"name": "TPR@1%FPR (%)",

"min": 0

},

"animationDuration": 1500,

"animationEasing": "cubicOut",

"series": [

{

"name": "Loss",

"type": "bar",

"data": [1.0, 3.6, 1.0, 1.6, 1.0, 1.2],

"itemStyle": { "color": "#bbb" }

},

{

"name": "ZLIB",

"type": "bar",

"data": [1.2, 4.5, 0.9, 0.5, 0.8, 0.7],

"itemStyle": { "color": "#ccc" }

},

{

"name": "Lowercase",

"type": "bar",

"data": [1.3, 4.4, 0.7, 0.2, 1.3, 0.9],

"itemStyle": { "color": "#aaa" }

},

{

"name": "Min-K%",

"type": "bar",

"data": [1.2, 3.9, 1.3, 0.8, 0.8, 0.8],

"itemStyle": { "color": "#d4a574" }

},

{

"name": "Min-K%++",

"type": "bar",

"data": [0.6, 1.6, 0.8, 1.1, 0.4, 0.7],

"itemStyle": { "color": "#c49464" }

},

{

"name": "BoWs",

"type": "bar",

"data": [1.1, 15.4, 0.9, 0.2, 0.3, 0.3],

"itemStyle": { "color": "#ddd" }

},

{

"name": "ReCall",

"type": "bar",

"data": [0.7, 3.7, 1.0, 0.7, 1.0, 0.9],

"itemStyle": { "color": "#9bc4cb" }

},

{

"name": "CON-ReCall",

"type": "bar",

"data": [1.1, 2.7, 1.5, 0.9, 0.8, 0.9],

"itemStyle": { "color": "#7ba7b0" }

},

{

"name": "Neighbor",

"type": "bar",

"data": [1.2, 0.8, 0.9, 1.1, 0.9, 1.0],

"itemStyle": { "color": "#a0a0a0" }

},

{

"name": "Ratio",

"type": "bar",

"data": [2.3, 8.1, 1.3, 1.8, 1.1, 1.5],

"itemStyle": { "color": "#e76f51" }

},

{

"name": "SecMI",

"type": "bar",

"data": [0.6, 4.4, 1.3, 0.9, 1.5, 1.0],

"itemStyle": { "color": "#f4a261" }

},

{

"name": "PIA",

"type": "bar",

"data": [1.1, 1.2, 1.4, 0.5, 1.1, 0.9],

"itemStyle": { "color": "#e9c46a" }

},

{

"name": "SAMA (Ours)",

"type": "bar",

"data": [17.8, 25.9, 2.7, 13.2, 13.6, 11.5],

"itemStyle": {

"color": "#7209b7",

"borderRadius": [3, 3, 0, 0]

}

}

]

}

On GitHub, SAMA achieves a TPR@1%FPR of 25.9% compared to 8.1% for Ratio, a roughly 3$\times$ improvement. On ArXiv, the gap is nearly 8$\times$ (17.8% vs. 2.3%). These numbers matter because real-world privacy auditing typically operates at exactly these strict thresholds.
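For readers who want to compute the two metrics used in this section on their own attack scores, here is a minimal pure-Python sketch. It follows the standard definitions (AUC as the probability that a member outscores a non-member, TPR at a fixed FPR via a threshold on non-member scores); it is not the paper's evaluation code, and the function names and tie-handling choices are assumptions.

```python
import math
from typing import Sequence


def auc(member_scores: Sequence[float], non_member_scores: Sequence[float]) -> float:
    """Probability that a random member outscores a random non-member.

    Ties count as half a win (the Mann-Whitney U formulation of AUC).
    """
    wins = 0.0
    for m in member_scores:
        for n in non_member_scores:
            if m > n:
                wins += 1.0
            elif m == n:
                wins += 0.5
    return wins / (len(member_scores) * len(non_member_scores))


def tpr_at_fpr(
    member_scores: Sequence[float],
    non_member_scores: Sequence[float],
    fpr: float = 0.01,
) -> float:
    """TPR at a threshold that flags at most a fraction `fpr` of non-members.

    A sample is flagged as a member when its score is strictly above the
    threshold.
    """
    ranked = sorted(non_member_scores, reverse=True)
    # Number of non-members we may misclassify; the small epsilon guards
    # against floating-point error in products such as 0.01 * 100.
    allowed = math.floor(fpr * len(ranked) + 1e-9)
    threshold = ranked[allowed] if allowed < len(ranked) else -math.inf
    flagged = sum(1 for m in member_scores if m > threshold)
    return flagged / len(member_scores)


if __name__ == "__main__":
    non_members = [i / 100 for i in range(100)]  # toy scores 0.00 .. 0.99
    members = [0.5, 0.985, 0.99, 1.0]            # toy member scores
    print(auc(members, non_members))
    print(tpr_at_fpr(members, non_members, fpr=0.01))
```

The pairwise AUC loop is quadratic in the number of samples, which is fine for a sanity check; a rank-based implementation would be the usual choice for large evaluation sets.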

Are DLMs Inherently More Vulnerable?

A natural follow-up: is this just about SAMA being a better attack, or are DLMs structurally more vulnerable than autoregressive models? To answer this, we fine-tuned LLaDA-8B (diffusion) and Llama-2-7B (autoregressive) on the same ArXiv data with matching hyperparameters and comparable utility (perplexity 9.3 vs. 8.8). We then ran standard, model-agnostic MIA baselines on both, with identical attack code, data, and evaluation.

{

"tooltip": {

"trigger": "axis",

"axisPointer": { "type": "shadow" }

},

"legend": {

"top": 0,

"data": ["LLaDA-8B (Diffusion)", "Llama-2-7B (Autoregressive)"]

},

"grid": {

"left": "4%",

"right": "4%",

"bottom": "4%",

"top": "14%",

"containLabel": true

},

"xAxis": {

"type": "category",

"data": ["Loss", "ZLIB", "Lowercase", "Min-K%", "ReCall"],

"axisLabel": { "fontSize": 12 }

},

"yAxis": {

"type": "value",

"name": "AUC",

"min": 0.45,

"max": 0.70

},

"animationDuration": 1500,

"animationEasing": "cubicOut",

"series": [

{

"name": "LLaDA-8B (Diffusion)",

"type": "bar",

"barGap": "10%",

"data": [0.563, 0.549, 0.573, 0.548, 0.562],

"itemStyle": { "color": "#7209b7", "borderRadius": [3, 3, 0, 0] },

"label": {

"show": true,

"position": "top",

"fontSize": 10,

"formatter": "{c}"

}

},

{

"name": "Llama-2-7B (Autoregressive)",

"type": "bar",

"data": [0.524, 0.517, 0.526, 0.519, 0.531],

"itemStyle": { "color": "#2a9d8f", "borderRadius": [3, 3, 0, 0] },

"label": {

"show": true,

"position": "top",

"fontSize": 10,

"formatter": "{c}"

}

}

]

}

Across every baseline, the DLM leaks more than its autoregressive counterpart, even before any DLM-specific attack is applied. The bidirectional information flow in DLMs appears to inherently expose more extractable membership signals. SAMA then amplifies this gap dramatically by exploiting the mask-configuration structure that is unique to DLMs.

Takeaways

DLMs have a qualitatively different privacy risk profile. The bidirectional masking mechanism creates an exponentially larger probing space for an attacker. This is not a minor variation on autoregressive risks; it is a fundamentally new threat surface that existing defenses were not designed for.

Existing MIA methods are nearly blind to DLM memorization. Methods designed for autoregressive models, and even those adapted from image diffusion, perform near random chance. The memorization is configuration-dependent and sparse; global averaging washes it out.

Robust aggregation is the key enabler. SAMA’s effectiveness comes from treating membership detection as rare-event estimation: sample many independent configurations, reduce each to a binary vote, and let consistent weak signals accumulate. The principle of sign-based voting under heavy-tailed noise is broadly applicable beyond DLMs.

The code and all experimental artifacts are available at https://github.com/Stry233/SAMA.

For the full details, check out the paper: Membership Inference Attacks Against Fine-tuned Diffusion Language Models (ICLR 2026).