The Signal is Local

A walkthrough of our USENIX Security 2026 paper: Window-based Membership Inference Attacks Against Fine-tuned Large Language Models

Introduction

When you fine-tune a large language model on a private dataset, how much does the model remember about individual training samples? And more importantly, can someone detect whether a specific text was part of that training set?

These questions sit at the core of membership inference attacks (MIAs), a class of privacy auditing techniques that aim to determine whether a given data sample was used to train a model.

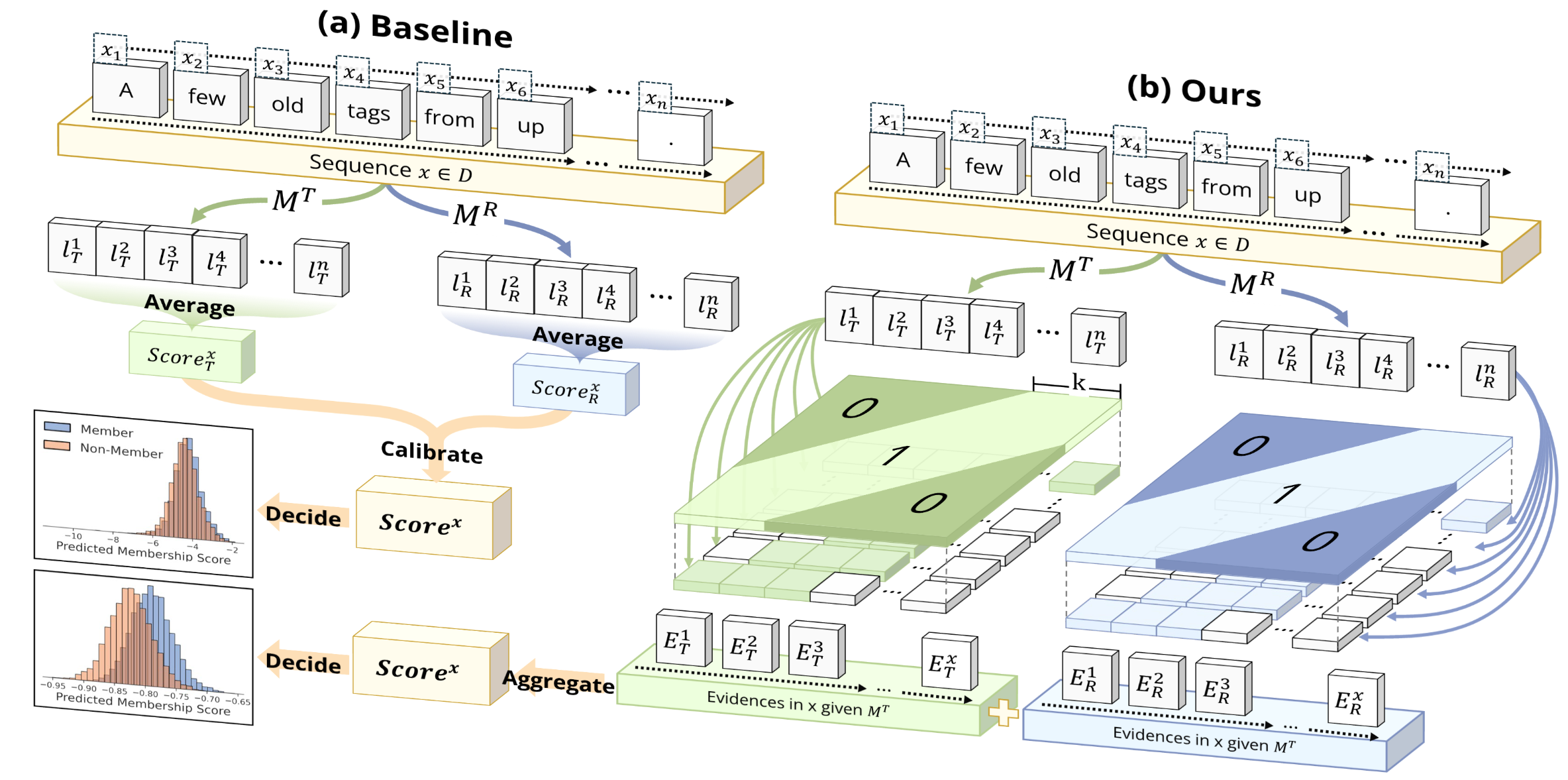

Most existing MIAs against LLMs follow a simple recipe: compute some global statistic (like average loss) over the entire text (or a group of tokens), and use it as a membership score. The assumption is that trained-on texts will, on average, have lower loss. However, this global-averaging approach fundamentally limits attack effectiveness, especially against fine-tuned LLMs where memorization signals are subtle and sparse.

In this paper

Our key insight is that these memorization signals are local: they are sparse and concentrated in small regions of the text rather than spread evenly across it. This insight led us to develop WBC (Window-Based Comparison), a membership inference attack that replaces global averaging with localized, window-based analysis. WBC slides windows of varying sizes across token loss sequences, makes binary comparisons at each position, and aggregates the results through robust voting. Across eleven datasets, WBC achieves 2–3$\times$ higher detection rates at low false positive thresholds compared to existing methods.

What’s Wrong with Global Averaging?

Extremal Events and Long-Tailed Noise

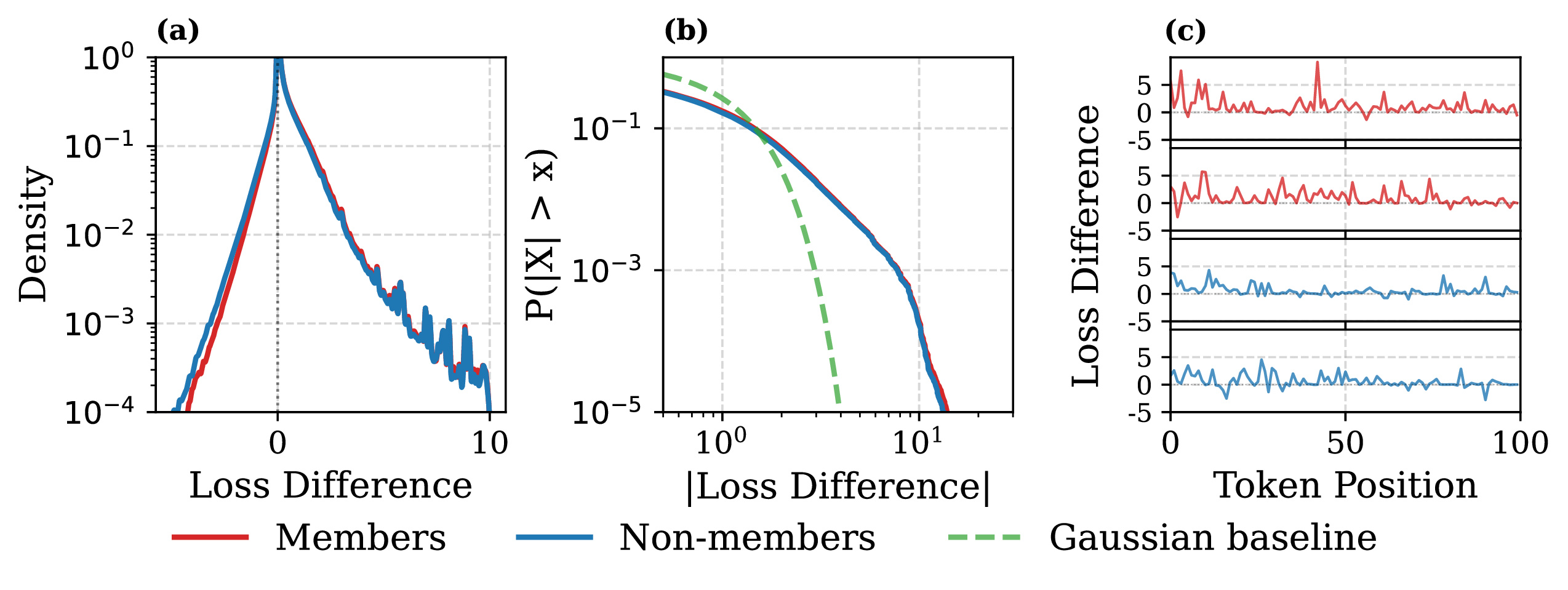

To understand why global averaging fails, we need to look at what actually happens at the token level when a model is fine-tuned. We analyzed over 10 million token-level loss comparisons from fine-tuning Pythia-2.8B.

The distributions reveal three important findings:

First, both members and non-members produce similar heavy-tailed distributions with excess kurtosis exceeding 18. The distributions are not Gaussian; they have extreme outliers in both directions.

Second, the tokens where the fine-tuned model dramatically reduces loss compared to the reference (the right tail) are not reliable membership signals. These are domain-specific tokens (technical terms, formatting patterns) that the model learns regardless of whether a specific sample was in the training set.

Third, and perhaps counterintuitively, the strongest membership signals appear in the left tail: tokens where the fine-tuned model actually has higher loss than the reference model. Members show a consistent rightward shift in this region compared to non-members. The intuition here is that fine-tuning creates subtle interference patterns for training samples, causing the model to occasionally perform worse on specific tokens while performing better overall.
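To make "excess kurtosis exceeding 18" concrete: excess kurtosis is the fourth standardized moment minus 3, so Gaussian data scores about 0 and larger values mean heavier tails. Below is a minimal NumPy sketch using the plain moment estimator; the helper name `excess_kurtosis` and the toy data are ours, not taken from the WBC code.

```python
import numpy as np

def excess_kurtosis(x):
    """Fourth standardized moment minus 3 (moment estimator, no bias correction).

    Returns roughly 0 for Gaussian data; heavy-tailed data gives large positive values.
    """
    x = np.asarray(x, dtype=float)
    centered = x - x.mean()
    m2 = np.mean(centered ** 2)  # second central moment (population variance)
    m4 = np.mean(centered ** 4)  # fourth central moment
    return float(m4 / m2 ** 2 - 3.0)

# Hypothetical data: 99 ordinary tokens plus a single extreme token.
spiky = np.array([0.0] * 99 + [10.0])
print(round(excess_kurtosis(spiky), 2))  # prints 95.01
```

A single extreme value among 100 is already enough to push the statistic far past 18, which is why a kurtosis that high signals rare but very large outliers rather than broadly wide noise.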

The Masking Effect

These observations explain exactly why global averaging fails. Consider a text with 512 tokens, and let $\Delta_j = \ell_j^R - \ell_j^T$ be the difference between the reference model's loss $\ell_j^R$ and the fine-tuned target model's loss $\ell_j^T$ on token $j$. Suppose 20 of those tokens carry genuine membership signals (small positive shifts in $\Delta_j$), while 5 tokens are domain-specific outliers with extreme negative values. When you average all 512 values, those 5 outliers can completely dominate the mean, drowning out the 20 informative tokens.
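Plugging in hypothetical magnitudes makes the masking concrete. Assume, purely for illustration, that each of the 20 signal tokens has $\Delta_j = 0.5$, each of the 5 outliers has $\Delta_j = -4$, and the remaining 487 tokens are exactly zero:

\[\bar{\Delta} = \frac{20 \cdot 0.5 + 5 \cdot (-4) + 487 \cdot 0}{512} = \frac{10 - 20}{512} \approx -0.020\]

Without the five outliers, the same average would be $+10/512 \approx +0.020$. Five tokens, under 1% of the text, are enough to flip the sign of the global mean and make a member look like a non-member.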

We formalize this through a point process model. Each per-token loss difference $\Delta_j$ is a superposition of three components:

\[\Delta_j = \delta_j(x) + \xi_j + \epsilon_j\]

where $\delta_j(x)$ is the membership signal (sparse, small magnitude), $\xi_j$ is domain-specific token noise (rare but extreme magnitude), and $\epsilon_j$ is baseline noise (frequent, small magnitude). The key problem is that $\xi_j$ has heavy tails, and even a single extreme value can dominate the global mean. As the text gets longer, you get more of these rare extreme tokens, and the signal-to-noise ratio of the global mean does not improve.
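The decomposition can be turned into a small simulator for experimenting with aggregation rules. This is a sketch under our own assumptions, not the paper's fitted model: $\delta_j(x)$ is a sparse Bernoulli shift of fixed size present only for members, $\xi_j$ is a rare Cauchy-distributed spike (Cauchy chosen only to make the tails extreme), and $\epsilon_j$ is Gaussian. The function name, parameter names, and default values are all hypothetical.

```python
import numpy as np

def sample_deltas(n, is_member, rng, p_signal=0.04, mu_signal=0.3,
                  p_domain=0.01, domain_scale=3.0, noise_sd=0.2):
    """Draw n per-token loss differences Delta_j = delta_j(x) + xi_j + eps_j.

    All distributions and defaults are illustrative assumptions, not fitted values.
    """
    # delta_j(x): sparse, small positive shifts. The mask is always drawn so that a
    # member and a non-member generated from the same seed share the same xi and eps.
    signal_mask = rng.random(n) < p_signal
    delta = signal_mask * mu_signal * float(is_member)
    # xi_j: rare but extreme domain-specific spikes (Cauchy has no finite mean or variance)
    xi = (rng.random(n) < p_domain) * domain_scale * rng.standard_cauchy(n)
    # eps_j: frequent, small baseline noise
    eps = rng.normal(0.0, noise_sd, n)
    return delta + xi + eps

member = sample_deltas(512, True, np.random.default_rng(7))
nonmember = sample_deltas(512, False, np.random.default_rng(7))
```

With a Cauchy spike component, the global mean of $\xi_j$ does not concentrate as $n$ grows (averages of Cauchy variables are again Cauchy), which mirrors the point above that longer texts do not rescue the global mean.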

The WBC Attack

Our solution is built on three design choices: sliding windows, sign-based aggregation, and geometric ensembling.

Sliding Windows

Instead of computing one global statistic, we slide a window of size $w$ across the token loss difference sequence and compute a local sum for each position:

\[S_i(w) = \sum_{j=i}^{i+w-1} \Delta_j\]

For a sequence of length $n$, this produces $n - w + 1$ overlapping windows, each providing a separate local comparison between the target and reference models. The window acts as a local averaging filter that smooths out token-level noise while preserving the structure of clustered membership signals.
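A minimal NumPy sketch of the window sums follows. Indexing is 0-based (the formula is 1-based), and the helper name `window_sums` is ours. Each window is summed directly via `sliding_window_view` rather than by differencing cumulative sums, because prefix-sum differences can lose precision right after an extreme outlier, which is exactly the heavy-tailed case at issue here.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def window_sums(deltas, w):
    """Return S_i(w) for every window start i (0-based): n - w + 1 overlapping sums."""
    deltas = np.asarray(deltas, dtype=float)
    if not 1 <= w <= deltas.shape[0]:
        raise ValueError("window size w must satisfy 1 <= w <= n")
    # View of shape (n - w + 1, w): row i holds Delta_i, ..., Delta_{i+w-1} (no copy)
    windows = sliding_window_view(deltas, w)
    return windows.sum(axis=1)

print(window_sums([1.0, -2.0, 3.0, 4.0], 2))  # [-1.  1.  7.]
```

For the sequence lengths and window sizes used here (hundreds of tokens, $w \le 40$), the $O(nw)$ cost of direct summation is negligible.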

Sign-Based Aggregation

Given the window sums, we need to aggregate them into a final score. The naive approach is to average them (mean-based aggregation). But this re-introduces the same vulnerability to outliers that we set out to avoid: a single window containing a domain-specific outlier can dominate the mean.

Instead, we use sign-based aggregation: we simply count the fraction of windows where the reference model has higher loss than the target:

\[T_{\text{sign}}(w) = \frac{1}{n-w+1} \sum_{i=1}^{n-w+1} \mathbf{1}[S_i(w) > 0]\]

This is essentially a vote: each window casts a binary ballot on whether the text looks like a member. The final score is the fraction of “yes” votes.
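The vote translates directly into code. Below is a minimal sketch (the helper name `sign_statistic` is ours) applied to a toy, hypothetical input: a constant small positive difference with one extreme negative token planted in the middle.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def sign_statistic(deltas, w):
    """T_sign(w): fraction of length-w windows whose sum S_i(w) is strictly positive."""
    deltas = np.asarray(deltas, dtype=float)
    sums = sliding_window_view(deltas, w).sum(axis=1)  # the n - w + 1 window sums
    # Each window casts one binary vote: 1 if the reference loss exceeds the target loss there
    return float(np.mean(sums > 0))

# Toy input: 100 tokens with Delta_j = 0.1, then one domain-style outlier at position 50.
clean = np.full(100, 0.1)
contaminated = clean.copy()
contaminated[50] = -1000.0

print(sign_statistic(clean, 5))         # 1.0
print(sign_statistic(contaminated, 5))  # 91/96, about 0.948
print(contaminated.mean())              # global mean turns strongly negative (about -9.9)
```

The outlier flips only the 5 votes of the windows that contain it, lowering the score from 1.0 to about 0.948, while the global mean swings from 0.1 to about -9.9. Because only the sign of each $S_i(w)$ matters, multiplying every $\Delta_j$ by a positive constant leaves the score unchanged; this is the scale invariance mentioned in this section.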

Why is this better than averaging? Each window contributes only a bounded vote, so an extreme token can flip at most the $w$ votes of the windows that contain it, shifting the score by at most $w/(n-w+1)$. The sign test has a breakdown point of 0.5, meaning that contamination cannot take control of the result unless it affects at least half of the windows. In contrast, the mean has a breakdown point of $1/n$: a single outlier can drag it arbitrarily far and flip its sign. Using the Pitman Asymptotic Relative Efficiency (ARE) framework, we can show that for the contaminated, heavy-tailed distributions we observe empirically, sign-based aggregation achieves 2–5$\times$ higher statistical efficiency than the mean.
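For background, the classical version of this comparison is a textbook result for the one-sample location problem with i.i.d. noise of density $f$ (symmetric about zero) and variance $\sigma^2$. Overlapping window sums are not i.i.d., so this only illustrates the mechanism behind the 2–5$\times$ figure; it is not the paper's own derivation:

\[\mathrm{ARE}(\text{sign}, \text{mean}) = 4\,\sigma^2 f(0)^2\]

Gaussian noise gives $2/\pi \approx 0.64$ (the mean wins), and Laplace noise gives $2$. For a contaminated Gaussian $(1-\varepsilon)\,\mathcal{N}(0,1) + \varepsilon\,\mathcal{N}(0,\tau^2)$, substituting $\sigma^2 = (1-\varepsilon) + \varepsilon\tau^2$ and $f(0) = \left[(1-\varepsilon) + \varepsilon/\tau\right]/\sqrt{2\pi}$ gives

\[\mathrm{ARE} = \frac{2}{\pi}\left[(1-\varepsilon) + \varepsilon\tau^2\right]\left[(1-\varepsilon) + \frac{\varepsilon}{\tau}\right]^2,\]

which grows without bound as the outlier scale $\tau$ increases. With the hypothetical values $\varepsilon = 0.1$ and $\tau = 10$, it is already about $5.7$.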

The sign-based approach also has practical advantages: it is scale-invariant (works regardless of loss magnitude), always produces scores in $[0, 1]$, and requires no dataset-specific normalization.

Geometric Ensemble

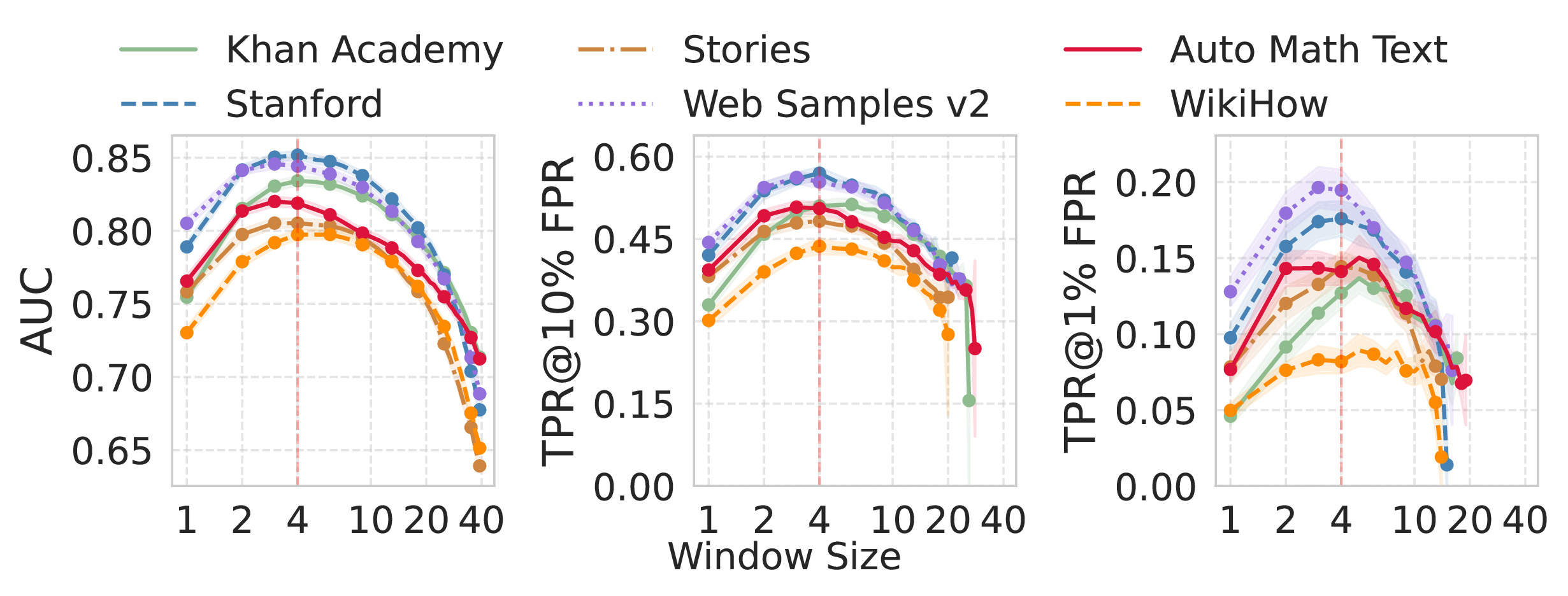

Different window sizes capture different types of memorization. Small windows (2–5 tokens) are sensitive to token-level artifacts. Larger windows (10–40 tokens) capture phrase-level and structural memorization patterns. But there is a fundamental trade-off:

- Too small: Each window has poor signal-to-noise ratio; individual tokens dominate

- Too large: Fewer independent windows, higher chance of contamination, and signal dilution when memorization is sparse

Rather than trying to pick a single optimal window size, we ensemble across a geometrically spaced set of sizes:

\[w_k = \text{round}\left(w_{\min} \cdot \left(\frac{w_{\max}}{w_{\min}}\right)^{(k-1)/(|W|-1)}\right)\]

with $w_{\min} = 2$, $w_{\max} = 40$, and $|W| = 10$. This densely samples small windows (where the trade-off is most sensitive) while maintaining coverage at larger scales. The final WBC score is a uniform average:

\[S_{\text{WBC}} = \frac{1}{|W|} \sum_{k=1}^{|W|} T_{\text{sign}}(w_k)\]

Experiments

Main Results

We evaluated WBC on eleven datasets spanning synthetic educational content (Cosmopedia subsets: Khan Academy, Stanford, Stories, Web Samples v2, AutoMathText, WikiHow), news (CC-News, XSum), general web text (WikiText-103), reviews (Amazon Reviews), and social media (Reddit). The target model is Pythia-2.8B fine-tuned on each dataset, with the pre-trained Pythia-2.8B as the reference.
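The per-token differences $\Delta_j = \ell_j^R - \ell_j^T$ require only each model's per-token negative log-likelihood. Below is a minimal sketch, assuming you already have next-token logits from the fine-tuned target and the pre-trained reference as NumPy arrays; obtaining them (for example, from a Hugging Face forward pass) is out of scope, and the helper names are ours. With causal language models, the logits at position $t$ predict token $t+1$, so the logits must be shifted by one relative to the token ids before calling these functions.

```python
import numpy as np

def token_losses(logits, token_ids):
    """Per-token negative log-likelihood -log p(x_j | x_<j).

    logits:    (n, V) array; row j scores the vocabulary for predicting token_ids[j]
    token_ids: (n,) integer array of the observed tokens (already aligned with logits)
    """
    logits = np.asarray(logits, dtype=float)
    token_ids = np.asarray(token_ids)
    # Numerically stable log-softmax over the vocabulary axis
    shifted = logits - logits.max(axis=1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return -log_probs[np.arange(token_ids.shape[0]), token_ids]

def loss_differences(ref_logits, target_logits, token_ids):
    """Delta_j = loss_j^R - loss_j^T; positive where the fine-tuned target fits token j better."""
    return token_losses(ref_logits, token_ids) - token_losses(target_logits, token_ids)
```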

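We compared against 13 baselines, including both reference-free methods such as Loss and reference-based methods such as Ratio and Difference, which, like WBC, compare the target model against a reference model.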

The following table reports AUC on all six Cosmopedia datasets.

| Method | Khan Academy | Stanford | Stories | Web Samples v2 | AutoMathText | WikiHow |
|---|---|---|---|---|---|---|
| Loss | 0.568 | 0.590 | 0.587 | 0.579 | 0.553 | 0.592 |
| ZLIB | 0.583 | 0.606 | 0.596 | 0.593 | 0.562 | 0.604 |
| Lowercase | 0.586 | 0.596 | 0.593 | 0.592 | 0.568 | 0.614 |
| Min-K% | 0.595 | 0.593 | 0.588 | 0.587 | 0.581 | 0.600 |
| Min-K%++ | 0.596 | 0.592 | 0.586 | 0.586 | 0.583 | 0.598 |
| BoWs | 0.499 | 0.498 | 0.502 | 0.493 | 0.500 | 0.498 |
| ReCall | 0.568 | 0.590 | 0.587 | 0.579 | 0.553 | 0.592 |
| CON-ReCall | 0.563 | 0.570 | 0.558 | 0.560 | 0.547 | 0.571 |
| DC-PDD | 0.567 | 0.570 | 0.574 | 0.569 | 0.560 | 0.571 |
| SPV-MIA | 0.695 | 0.760 | 0.763 | 0.787 | 0.759 | 0.719 |
| Ratio | 0.703 | 0.781 | 0.769 | 0.788 | 0.768 | 0.714 |
| Difference | 0.692 | 0.742 | 0.719 | 0.739 | 0.700 | 0.709 |
| Ensemble | 0.687 | 0.738 | 0.758 | 0.786 | 0.749 | 0.724 |
| WBC (Ours) | 0.837 | 0.854 | 0.808 | 0.843 | 0.814 | 0.802 |

The TPR at low false positive rates is where the gap becomes most practically meaningful. The next table reports TPR@1%FPR (in %): the fraction of members correctly identified when only 1% of non-members are misclassified as members.

| Method | Khan Academy | Stanford | Stories | Web Samples v2 | AutoMathText | WikiHow |
|---|---|---|---|---|---|---|
| Loss | 1.7 | 2.3 | 2.0 | 2.2 | 1.4 | 2.2 |
| ZLIB | 1.9 | 2.4 | 1.9 | 2.3 | 1.5 | 2.2 |
| Lowercase | 1.3 | 2.4 | 1.8 | 2.1 | 1.5 | 2.4 |
| Min-K% | 2.4 | 2.4 | 1.9 | 2.3 | 2.1 | 2.5 |
| Min-K%++ | 2.2 | 2.3 | 1.8 | 2.0 | 2.2 | 2.7 |
| BoWs | 1.1 | 1.2 | 1.1 | 1.0 | 0.9 | 0.8 |
| ReCall | 1.7 | 2.3 | 2.0 | 2.2 | 1.4 | 2.2 |
| CON-ReCall | 1.4 | 1.8 | 2.0 | 1.7 | 1.5 | 1.9 |
| DC-PDD | 1.5 | 1.4 | 1.9 | 1.9 | 1.5 | 1.6 |
| SPV-MIA | 4.9 | 7.7 | 7.0 | 8.8 | 6.3 | 5.3 |
| Ratio | 3.7 | 8.0 | 9.1 | 9.0 | 7.5 | 4.2 |
| Difference | 4.5 | 8.0 | 7.9 | 9.4 | 5.1 | 4.7 |
| Ensemble | 6.1 | 7.5 | 4.8 | 8.6 | 5.1 | 6.5 |
| WBC (Ours) | 14.6 | 19.4 | 16.0 | 19.8 | 15.3 | 9.6 |

WBC achieves an average AUC of 0.826 compared to 0.754 for the best baseline (Ratio). More importantly, at the practically relevant low false positive regime, WBC achieves TPR@1% FPR of 14.6% versus 5.2% for the best baseline, a 2.8$\times$ improvement.

On individual datasets, the gains are even more striking. On Khan Academy, WBC reaches a TPR@0.1% FPR of 2.6%, which is 3.7$\times$ better than the next best method. Reference-free methods perform only slightly better than random chance (AUC of about 0.6 or below), confirming that the reference model is essential for membership inference against fine-tuned models.
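For readers reproducing these numbers, both metrics are straightforward to compute from membership scores. Below is a minimal sketch with hypothetical helper names. It uses the pairwise definition of AUC, which is fine for a few thousand samples (`sklearn.metrics.roc_auc_score` is the usual choice at scale), and it sets the TPR@FPR threshold from the non-member scores alone.

```python
import numpy as np

def auc(member_scores, nonmember_scores):
    """Probability that a random member outscores a random non-member (ties count 1/2)."""
    m = np.asarray(member_scores, dtype=float)[:, None]
    nm = np.asarray(nonmember_scores, dtype=float)[None, :]
    # Pairwise comparison matrix of shape (num_members, num_nonmembers)
    return float(np.mean((m > nm) + 0.5 * (m == nm)))

def tpr_at_fpr(member_scores, nonmember_scores, fpr=0.01):
    """Fraction of members flagged when at most `fpr` of non-members are flagged."""
    nm = np.sort(np.asarray(nonmember_scores, dtype=float))[::-1]  # highest score first
    k = int(np.floor(fpr * nm.shape[0]))  # how many non-members we may flag
    # Flag anything strictly above the (k+1)-th highest non-member score,
    # so at most k non-members (fewer if scores tie) are flagged.
    threshold = nm[k] if k < nm.shape[0] else -np.inf
    return float(np.mean(np.asarray(member_scores, dtype=float) > threshold))
```

Using a strict inequality keeps the realized false positive rate at or below the target even when non-member scores tie at the threshold.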

Ablation Highlights

We ran extensive ablations to validate each design choice.

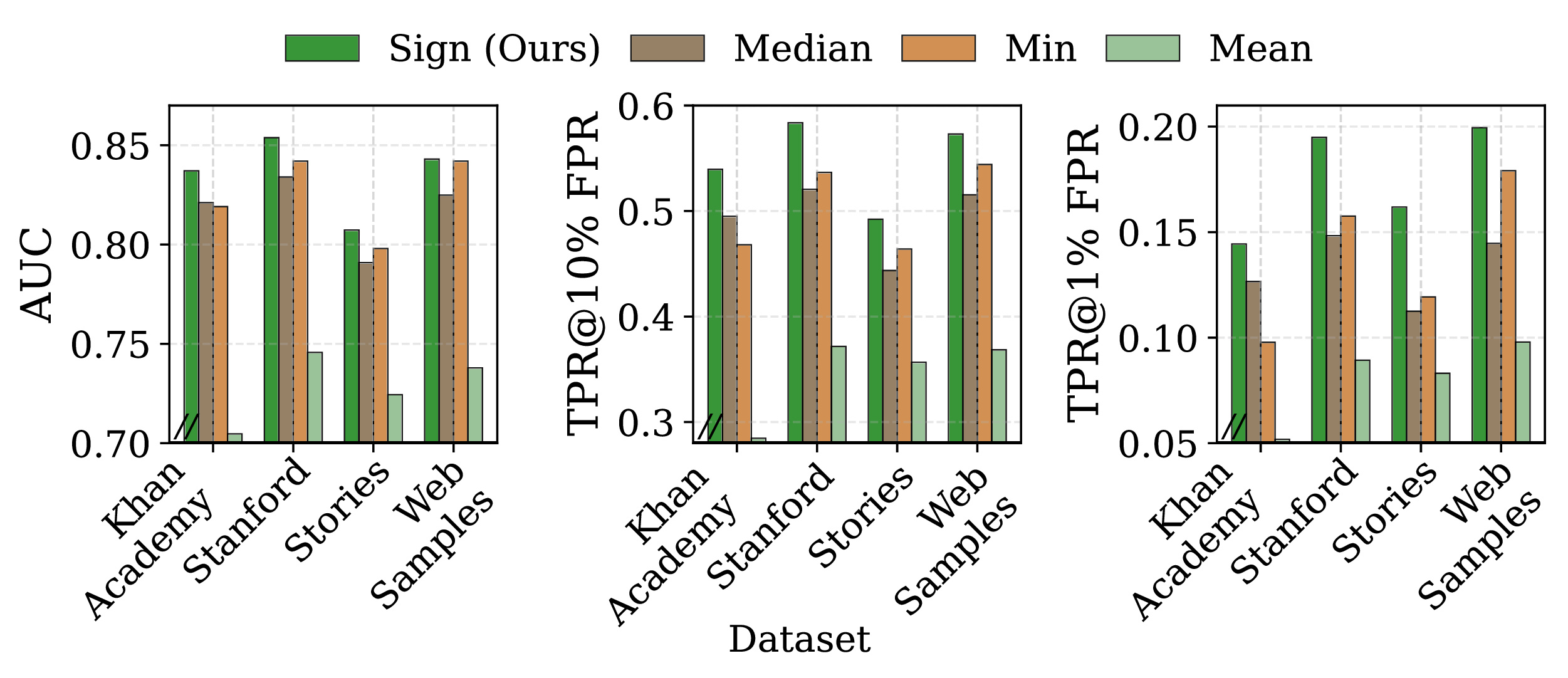

Window size matters, but ensembling removes the guesswork. Individual window sizes show a clear trade-off: optimal sizes typically fall in the 3–10 token range. But the ensemble consistently matches or exceeds the best single window, with an average improvement of 1.6% AUC and 16% in TPR@1% FPR. This means practitioners don’t need to tune window size per dataset.
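Because the ensemble uses fixed settings ($w_{\min} = 2$, $w_{\max} = 40$, $|W| = 10$), the whole score can be computed with no per-dataset tuning. Below is a self-contained sketch of the geometric window sizes and the uniform average of sign statistics, following the formulas in the attack description. The function names are ours, and we assume the text has at least $w_{\max}$ tokens (shorter inputs would need the larger windows dropped).

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def geometric_window_sizes(w_min=2, w_max=40, num_sizes=10):
    """w_k = round(w_min * (w_max / w_min) ** ((k - 1) / (num_sizes - 1))), k = 1..num_sizes."""
    ratio = w_max / w_min
    return [int(round(w_min * ratio ** ((k - 1) / (num_sizes - 1))))
            for k in range(1, num_sizes + 1)]

def wbc_score(deltas, w_min=2, w_max=40, num_sizes=10):
    """Uniform average, over the window sizes, of the fraction of positive window sums."""
    deltas = np.asarray(deltas, dtype=float)
    if deltas.shape[0] < w_max:
        raise ValueError("this sketch assumes at least w_max tokens")
    votes = []
    for w in geometric_window_sizes(w_min, w_max, num_sizes):
        sums = sliding_window_view(deltas, w).sum(axis=1)  # S_i(w) for all n - w + 1 windows
        votes.append(np.mean(sums > 0))                    # T_sign(w)
    return float(np.mean(votes))                           # S_WBC

print(geometric_window_sizes())  # [2, 3, 4, 5, 8, 11, 15, 21, 29, 40]
```

Four of the ten sizes fall at 5 tokens or fewer, which is the dense sampling of small windows described in the geometric ensemble design.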

Sign beats mean. Replacing sign-based aggregation with mean-based aggregation causes a 2.2$\times$ drop in TPR@1% FPR, confirming that robustness to outliers is critical in practice.

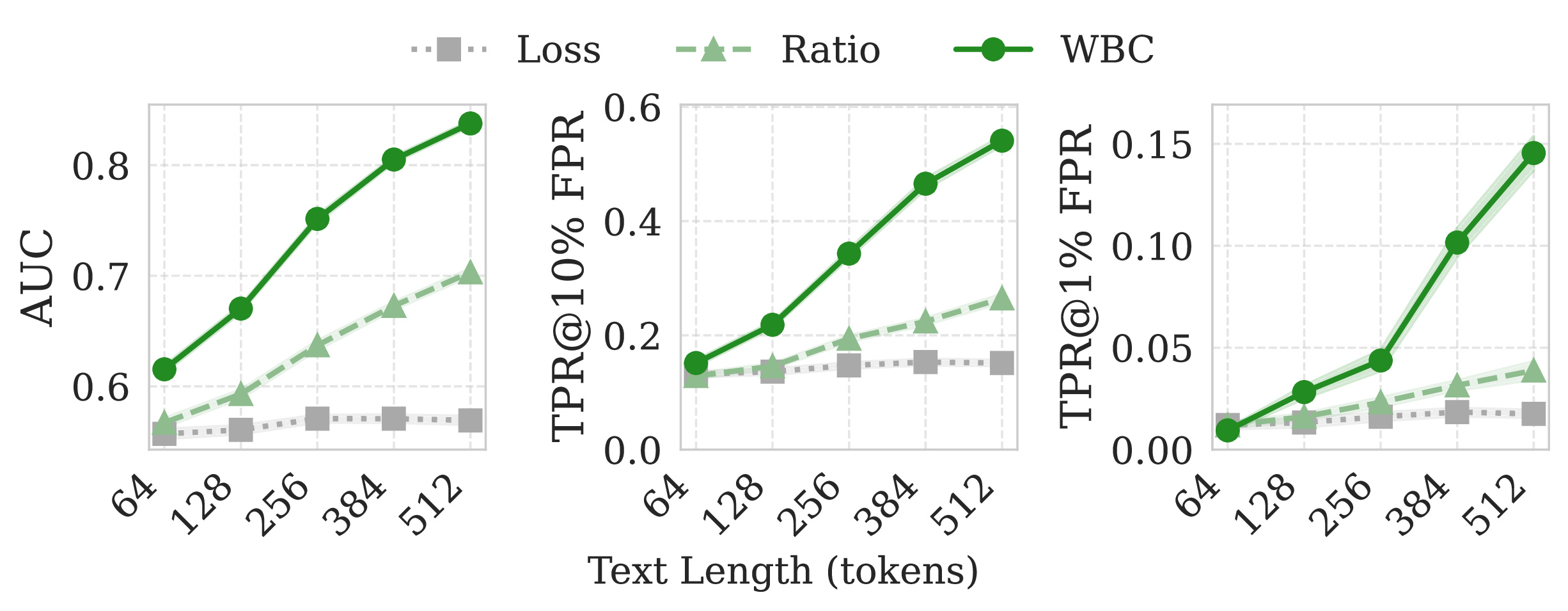

Longer text helps, and WBC benefits far more than the baseline. Scaling text from 64 to 512 tokens (8$\times$ longer) yields a 7.1$\times$ gain in TPR@1% FPR for WBC, compared to only 3.6$\times$ for the Ratio baseline. WBC benefits more from longer text because longer inputs supply more windows, including more non-overlapping ones, to vote with.

Generalization Across Scales

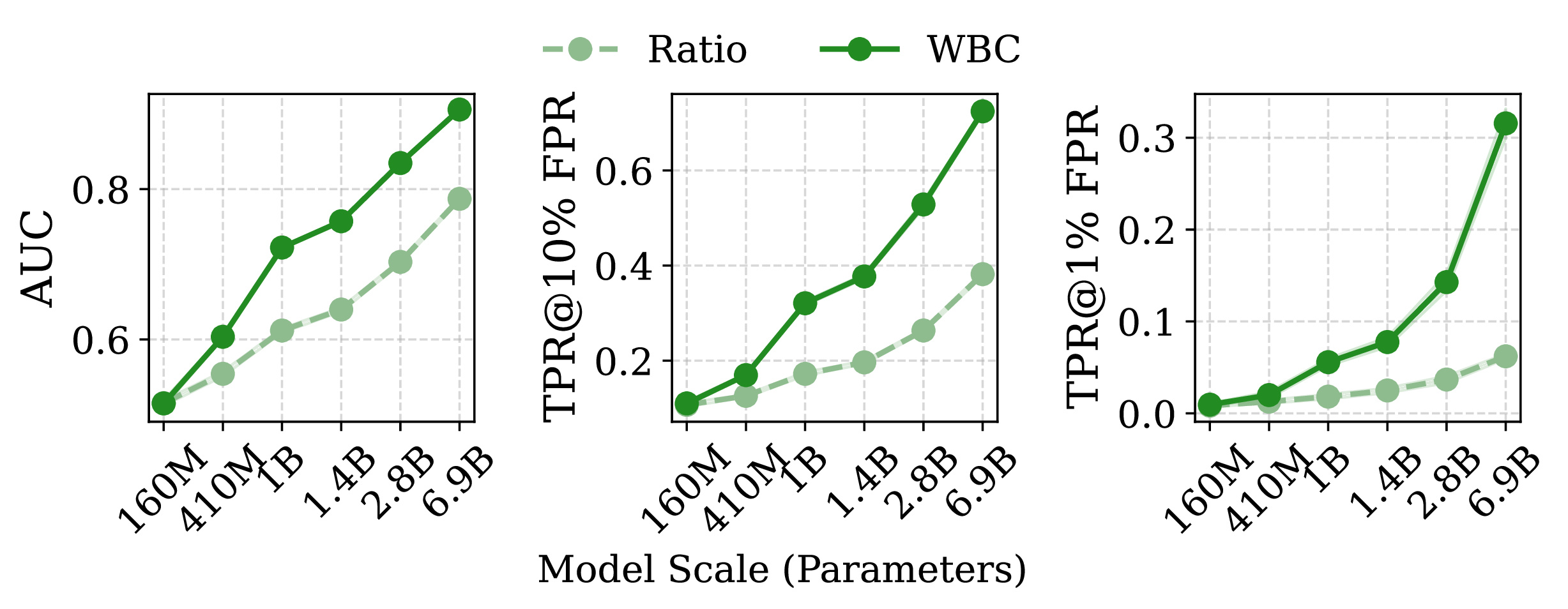

We tested WBC across the Pythia model family (160M to 6.9B parameters), as well as GPT-2, GPT-J-6B, Llama-3.2-3B, and Mamba-1.4B (a state-space model, not a transformer).

Two key findings: first, vulnerability increases with model scale. Larger models memorize more, and WBC consistently captures this. Second, WBC works across fundamentally different architectures: the same algorithm, with no modifications, is effective on both transformer-based and state-space models.

Takeaways

For privacy researchers: The global-averaging paradigm for membership inference has been leaving substantial signal on the table. Membership signals in fine-tuned LLMs are sparse, localized, and buried under heavy-tailed domain noise. Window-based analysis with robust aggregation extracts these signals far more effectively.

For LLM practitioners: If you are fine-tuning on sensitive data, be aware that the privacy risk may be higher than what existing auditing tools suggest. WBC reveals vulnerabilities that standard MIAs miss.

For the broader community: This work highlights that how we aggregate evidence matters as much as what evidence we look for. The shift from global statistics to localized comparison, and from mean-based to sign-based aggregation, applies broadly to any detection problem with sparse signals in heavy-tailed noise.

The code and all experimental artifacts are available at https://github.com/Stry233/WBC.

For more details, check out the full paper: Window-based Membership Inference Attacks Against Fine-tuned Large Language Models.