SAMA: A New Attack on Diffusion Language Models @ ICLR 2026

I am excited to share that my paper "Membership Inference Attacks Against Fine-tuned Diffusion Language Models" has been accepted to ICLR 2026! This work is the first systematic investigation into the privacy vulnerabilities of Diffusion Language Models (DLMs).

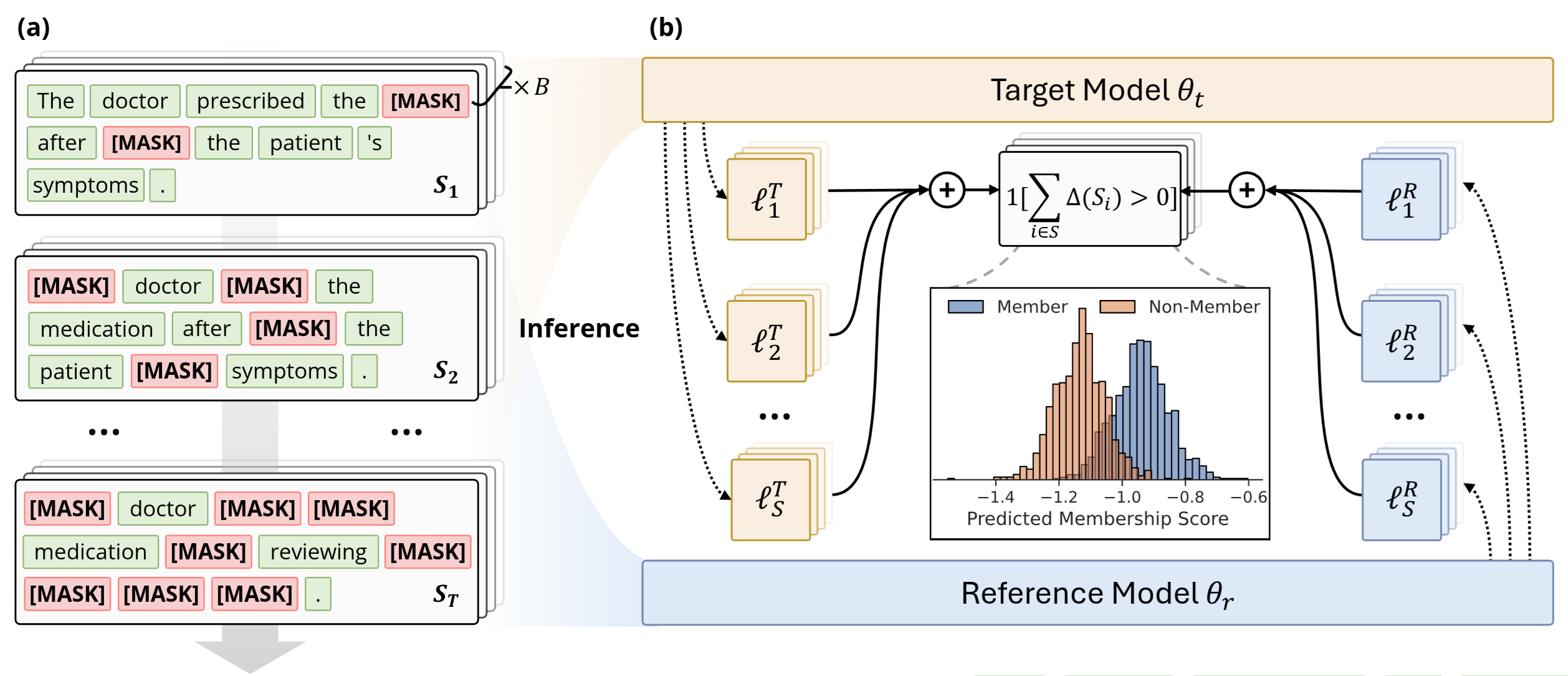

While autoregressive models have long been scrutinized for privacy risks, the emerging class of DLMs, which operate via bidirectional masked prediction, has remained largely unexplored. Our research reveals that this architecture introduces critical new vulnerabilities. Unlike the fixed prediction pattern of traditional models, a DLM can be queried with many different subsets of positions masked. The number of possible mask configurations grows exponentially with sequence length, which greatly enlarges the attack surface.

To expose these risks, we developed SAMA (Subset-Aggregated Membership Attack). SAMA addresses the challenge of sparse membership signals by employing a robust aggregation strategy. By sampling masked subsets across progressive densities and utilizing sign-based statistics, SAMA effectively filters out heavy-tailed noise to isolate genuine memorization patterns. Our experiments show that SAMA achieves a 30% relative improvement in AUC over the best baselines, with up to 8x improvement at low false positive rates.
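For readers who want a feel for the idea, here is a minimal sketch of sign-based aggregation over random masked subsets. It is an illustration under my own simplifying assumptions, not the implementation from the paper: the function name `sign_aggregated_score`, the callables `target_loss` and `ref_loss` (each taking a boolean mask and returning per-token losses), the density schedule, and the toy losses are all hypothetical.

```python
import numpy as np

def sign_aggregated_score(target_loss, ref_loss, seq_len,
                          densities=(0.1, 0.3, 0.5),
                          subsets_per_density=16, seed=0):
    """Illustrative membership score in [-1, 1]; higher means more member-like."""
    rng = np.random.default_rng(seed)
    signs = []
    for density in densities:  # progressively denser masks
        k = max(1, int(round(density * seq_len)))  # positions to mask
        for _ in range(subsets_per_density):
            idx = rng.choice(seq_len, size=k, replace=False)
            mask = np.zeros(seq_len, dtype=bool)
            mask[idx] = True
            # Loss gap on the masked positions: reference minus target.
            diff = ref_loss(mask)[mask] - target_loss(mask)[mask]
            # Keep only the direction of the gap, so a single
            # heavy-tailed outlier cannot dominate the aggregate.
            signs.append(np.sign(diff.mean()))
    return float(np.mean(signs))

# Toy losses (assumed, noise-free): a "member" has slightly lower loss
# than the reference everywhere, a "non-member" slightly higher.
base = np.linspace(1.0, 2.0, 32)
ref = lambda mask: base
member = lambda mask: base - 0.05
non_member = lambda mask: base + 0.05

print(sign_aggregated_score(member, ref, 32))
print(sign_aggregated_score(non_member, ref, 32))
```

Because each subset contributes only a vote of +1, 0, or -1, a few subsets with extreme loss values shift the score far less than they would shift a plain average of loss differences.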

A heartfelt thank you to my collaborators Kaiyuan Zhang, Yuntao Du, and Edoardo Stoppa, as well as Charles Fleming and Ashish Kundu from Cisco Research. I am also deeply grateful for the guidance of Prof. Bruno Ribeiro and my advisor Prof. Ninghui Li.

I look forward to sharing these findings in Rio this April! Boiler Up! 🚂